What is Long Short-Term Memory?

LONG SHORT-TERM MEMORY (LSTM)???

I think I saw this term somewhere 🤔... Maybe in one of the previous articles 😕.

That's right!! We saw it when we learned about recurrent neural networks (RNNs).

In an LSTM network, as in any RNN, the output from the previous time step is fed into the current step as input. The LSTM was introduced by Hochreiter and Schmidhuber in 1997. It addresses the long-term dependency problem of RNNs: a plain RNN can predict a word from recent context, but it struggles when the relevant information appeared many steps earlier. An RNN's performance degrades as that gap grows. An LSTM, by design, can retain information over long spans. It is used for processing, forecasting, and classifying time-series data.
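To get a feel for why a plain RNN struggles as the gap grows, here is a minimal sketch. It assumes a toy scalar RNN `h_t = tanh(w * h_{t-1} + x_t)`, which is an illustrative simplification and not something from this article. The function name `gradient_through_gap` and the weight value `0.9` are hypothetical. It measures how strongly the final hidden state still depends on the starting state.

```python
import math

def gradient_through_gap(w, inputs, h0=0.0):
    """Derivative of the last hidden state with respect to h0
    for the toy scalar RNN h_t = tanh(w * h_{t-1} + x_t)."""
    h = h0
    grad = 1.0
    for x in inputs:
        h = math.tanh(w * h + x)
        # Chain rule: d h_t / d h_{t-1} = w * (1 - h_t^2)
        grad *= w * (1.0 - h * h)
    return grad

# Hypothetical setup: same weight, same inputs, only the gap length differs.
short_gap = gradient_through_gap(0.9, [0.1] * 5)
long_gap = gradient_through_gap(0.9, [0.1] * 50)
print(short_gap, long_gap)
```

In this toy setup every step multiplies the signal by a factor smaller than 1, so the influence of early inputs fades quickly as the sequence gets longer. That fading is the kind of problem LSTMs were designed to reduce.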

LSTMs are a type of RNN specially designed to handle sequential data such as time series, speech, and text. Because they can learn long-term relationships in such data, LSTM networks are particularly well suited to applications like language translation, speech recognition, and time-series forecasting.

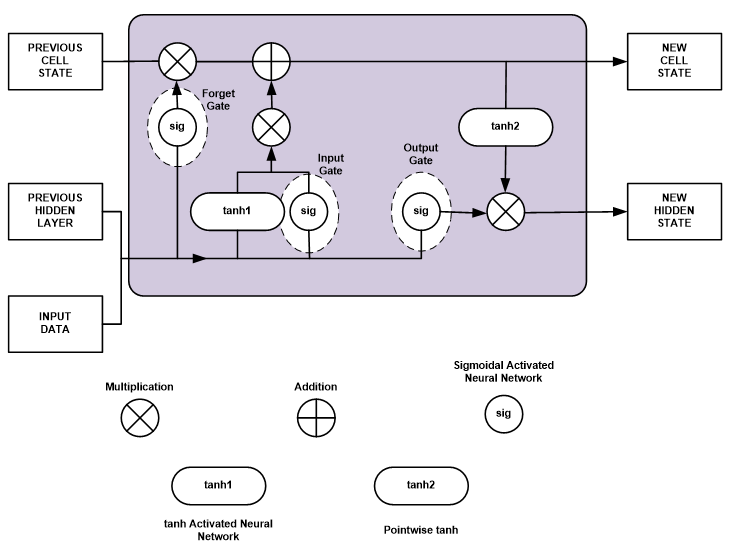

A typical RNN has just one hidden state, which makes learning long-term dependencies challenging. LSTMs solve this by adding memory cells, containers that can store information for a long time. Each memory cell is controlled by three gates: the input gate, the forget gate, and the output gate. The input gate controls what new information is added to the cell, the forget gate controls what is removed from it, and the output gate regulates what the cell exposes as its output. By deciding which information to keep or discard as it moves through the network, LSTM networks can learn long-term dependencies.
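The gate mechanics can be sketched in a few lines of plain Python. This is a minimal, scalar version of the commonly used LSTM update, not code from any particular library. The names `lstm_step`, `params`, and `hold_params`, the "candidate" value, and all the weight values are assumptions made for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, params):
    """One LSTM time step with scalar input, hidden state, and cell state.

    params maps each name to a hypothetical (input weight, recurrent weight, bias).
    """
    def pre_activation(name):
        w_x, w_h, b = params[name]
        return w_x * x + w_h * h_prev + b

    f = sigmoid(pre_activation("forget"))       # how much of the old cell state to keep
    i = sigmoid(pre_activation("input"))        # how much new information to let in
    g = math.tanh(pre_activation("candidate"))  # the new information itself
    o = sigmoid(pre_activation("output"))       # how much of the cell to expose

    c = f * c_prev + i * g   # updated memory cell
    h = o * math.tanh(c)     # updated hidden state (the output)
    return h, c

# Illustrative weights: forget gate almost fully open, input gate almost shut.
hold_params = {
    "forget": (0.0, 0.0, 20.0),
    "input": (0.0, 0.0, -20.0),
    "candidate": (1.0, 1.0, 0.0),
    "output": (0.0, 0.0, 20.0),
}

h, c = 0.0, 0.7
for x in [5.0, -3.0, 2.0]:
    h, c = lstm_step(x, h, c, hold_params)
print(c)  # stays very close to the starting value 0.7
```

With the forget gate near 1 and the input gate near 0, the memory cell carries its value across steps almost unchanged, whatever the inputs are. In a trained network these gate values are learned rather than fixed by hand.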

LSTM layers can be stacked to build deep LSTM networks, which can recognize even more intricate patterns in sequential data. LSTMs can also be combined with Convolutional Neural Networks (CNNs), another type of neural network architecture, to analyze images and videos.
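Stacking simply means that the sequence of hidden states produced by one LSTM layer becomes the input sequence of the next. Here is a rough, self-contained sketch with scalar layers. The function names `run_lstm_layer` and `run_stacked_lstm` and the weight values are hypothetical, chosen only to show the wiring.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def run_lstm_layer(inputs, weights):
    """Run one scalar LSTM layer over a sequence and return every hidden state."""
    h, c = 0.0, 0.0
    outputs = []
    for x in inputs:
        # Each entry of weights is an (input weight, recurrent weight, bias) triple.
        gates = {}
        for name in ("forget", "input", "output"):
            w_x, w_h, b = weights[name]
            gates[name] = sigmoid(w_x * x + w_h * h + b)
        w_x, w_h, b = weights["candidate"]
        g = math.tanh(w_x * x + w_h * h + b)
        c = gates["forget"] * c + gates["input"] * g
        h = gates["output"] * math.tanh(c)
        outputs.append(h)
    return outputs

def run_stacked_lstm(inputs, layers):
    """Feed the output sequence of each layer into the next one."""
    sequence = inputs
    for weights in layers:
        sequence = run_lstm_layer(sequence, weights)
    return sequence

# Hypothetical weights, reused for both layers purely for brevity.
layer = {
    "forget": (0.5, 0.5, 1.0),
    "input": (0.5, 0.5, 0.0),
    "candidate": (1.0, 0.5, 0.0),
    "output": (0.5, 0.5, 0.0),
}
signal = [1.0, 0.5, -0.3, 0.8]
deep_output = run_stacked_lstm(signal, [layer, layer])
print(deep_output)
```

In practice each layer would have its own learned weights and vector-valued states. The sketch only shows how the layers are chained.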

Hope you guys like the article. Stay tuned and keep supporting 😊. Kindly give your valuable suggestions in the comments section 🙏.

Akshay Juneja authored 20+ articles for INFO4EEE Website on Deep Learning.
